Web Crawler Connector

Profile: Project Creator

This page provides an overview of the Web Crawler connector.

Project creators use this connector to crawl websites, converting web pages to PDFs and indexing them within their Squirro project.

How to Crawl a Website

Open your Squirro project.

Navigate to the Setup space.

Click the Data tab. By default, you will be on the Data Sources page, as shown in the example screenshot below:

Click the orange plus sign (+) in the top-right corner of the page to add new data.

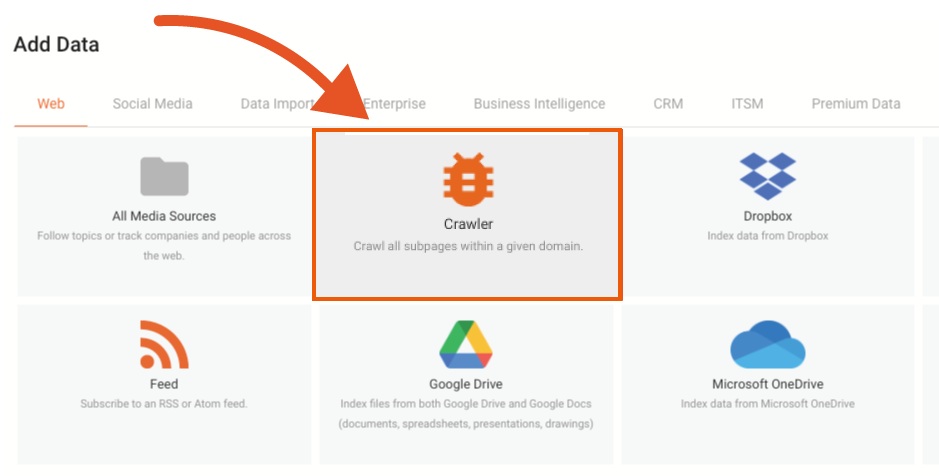

Click the Data Import tab.

Select the Web Crawler.

Configure the settings described in the sections below and add the data source to your project.

Configuration Options

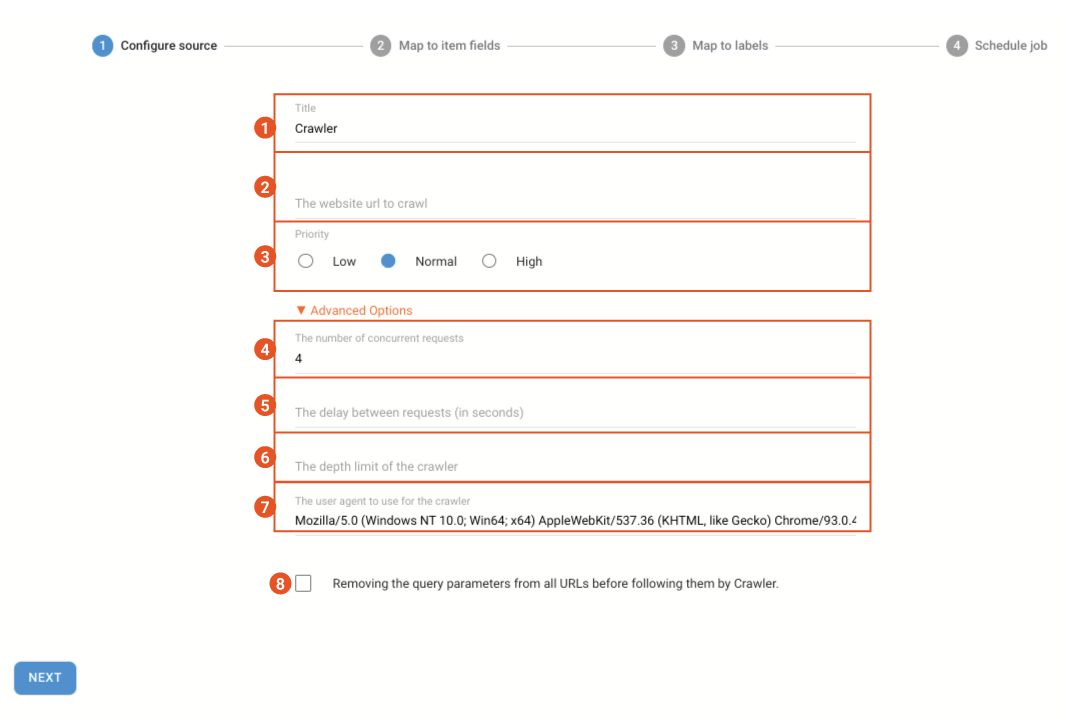

The Web Crawler data loading app includes the following configuration options, shown in the example screenshot below and explained in the associated table:

Enter the title of the data source as it will appear in your project dashboard.

Enter the URL of the website you want to crawl, starting with https://, for example, https://www.squirro.com.

Select the data-processing priority for the data source. The default is Normal.

Select the number of concurrent requests sent to the site. If you are crawling a large site, you can increase this number to speed up the process, though this places additional load on the website's server. The default is 4 concurrent requests.

Select the delay between requests, in seconds. The default is 0.5 seconds.
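To illustrate how the concurrency and delay settings interact, here is a minimal sketch of a throttled fetch loop. It is not Squirro's implementation: `fake_fetch`, `throttled_crawl`, the example URLs, and the way the delay is applied (each slot pauses after its request before being released) are assumptions made for illustration, and no network access is involved.

```python
import asyncio


async def fake_fetch(url: str) -> str:
    """Stand-in for an HTTP request (hypothetical; no network access)."""
    await asyncio.sleep(0.01)
    return f"<html>{url}</html>"


async def throttled_crawl(urls, concurrent_requests=4, delay=0.5):
    """Fetch urls with at most `concurrent_requests` requests in flight.

    Assumption: each slot waits `delay` seconds after its request before
    it is released. A real crawler may schedule delays differently.
    """
    semaphore = asyncio.Semaphore(concurrent_requests)
    in_flight = 0
    peak = 0  # highest number of simultaneous requests observed
    pages = {}

    async def worker(url):
        nonlocal in_flight, peak
        async with semaphore:
            in_flight += 1
            peak = max(peak, in_flight)
            pages[url] = await fake_fetch(url)
            in_flight -= 1
            await asyncio.sleep(delay)

    await asyncio.gather(*(worker(u) for u in urls))
    return pages, peak


urls = [f"https://www.example.com/page/{i}" for i in range(10)]
pages, peak = asyncio.run(throttled_crawl(urls, concurrent_requests=4, delay=0.01))
print(len(pages), peak)
```

In this sketch, raising `concurrent_requests` or lowering `delay` shortens the total run time, at the cost of more simultaneous requests reaching the server.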

Select the depth limit of the crawl, explained in the section below. The default is 0, which crawls the entire site.
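As a rough illustration of what a depth limit does, the sketch below runs a breadth-first crawl over a small in-memory link graph. The `LINKS` site map and the `crawl_with_depth_limit` function are hypothetical, and counting depth as the number of link hops from the start URL is an assumption; only the convention that 0 means no limit comes from the description above.

```python
from collections import deque

# Hypothetical site: each page maps to the pages it links to.
LINKS = {
    "/": ["/products", "/about"],
    "/products": ["/products/a", "/products/b"],
    "/about": ["/"],
    "/products/a": ["/products/a/specs"],
    "/products/b": [],
    "/products/a/specs": [],
}


def crawl_with_depth_limit(start, depth_limit=0):
    """Breadth-first crawl; depth_limit=0 means no limit."""
    seen = {start}
    queue = deque([(start, 0)])
    order = []
    while queue:
        page, depth = queue.popleft()
        order.append(page)
        if depth_limit and depth >= depth_limit:
            continue  # page is crawled, but its links are not followed
        for link in LINKS.get(page, []):
            if link not in seen:
                seen.add(link)
                queue.append((link, depth + 1))
    return order


limited = crawl_with_depth_limit("/", depth_limit=1)
unlimited = crawl_with_depth_limit("/")
print(limited)    # ['/', '/products', '/about']
print(unlimited)  # all six pages
```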

Specify the user agent the crawler uses. Squirro recommends keeping the default user agent, but advanced users can specify a custom one here.

Remove query parameters. For sites with pages built from query parameters (e.g. e-commerce sites), you can choose to remove the query parameters from crawled URLs.
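To show what removing query parameters means for a URL, here is a minimal sketch using Python's standard `urllib.parse` module. The `remove_query_parameters` helper and the shop URLs are hypothetical, and the connector's actual normalization rules may differ.

```python
from urllib.parse import urlsplit, urlunsplit


def remove_query_parameters(url: str) -> str:
    """Return the URL with its query string removed."""
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", parts.fragment))


urls = [
    "https://shop.example.com/item?id=42&color=red",
    "https://shop.example.com/item?id=42&color=blue",
    "https://shop.example.com/cart",
]

# Variants that differ only in their query string collapse to one URL.
unique = sorted({remove_query_parameters(u) for u in urls})
print(unique)
```

Note that on sites where the query string selects the content (for example `?id=42`), stripping it makes otherwise distinct pages look identical, so the option is worth enabling only when that is the intended result.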

Troubleshooting

If you are having issues ingesting data into Squirro using the Web Crawler, see Data Loading Troubleshooting or contact Squirro Support.